Perception

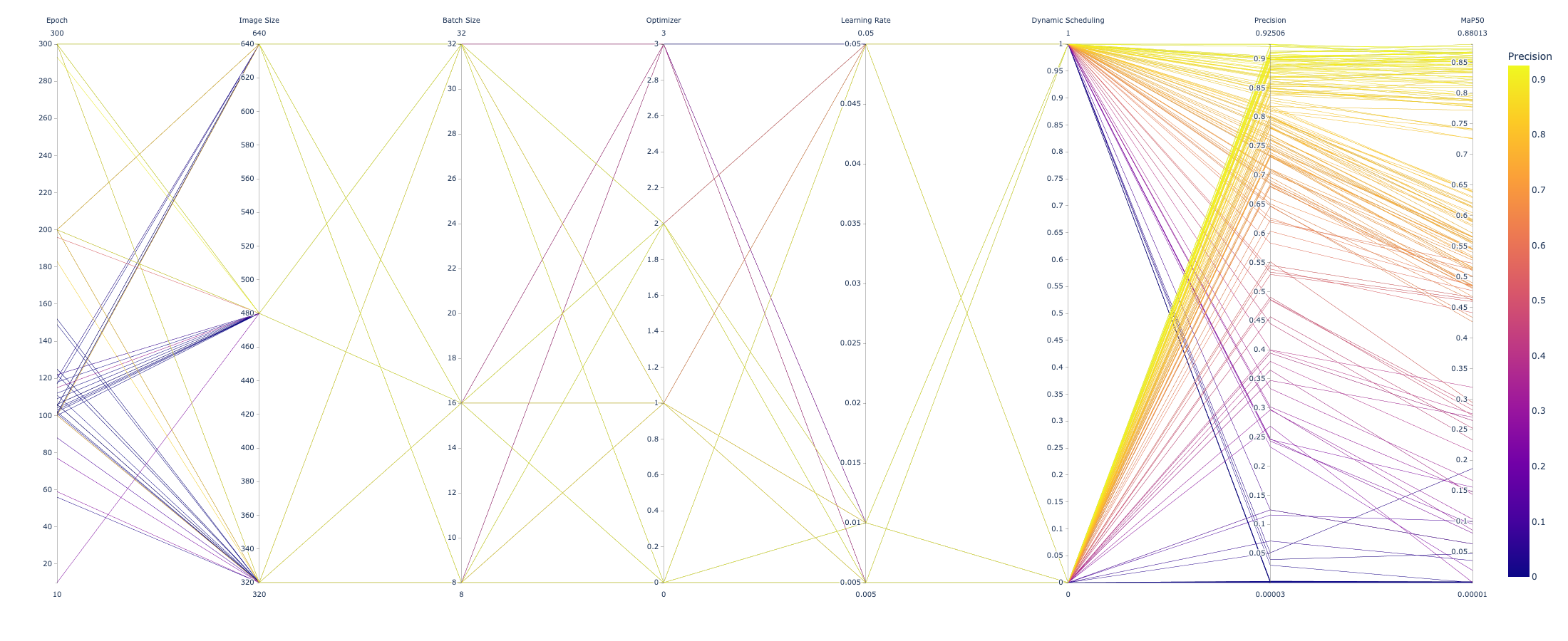

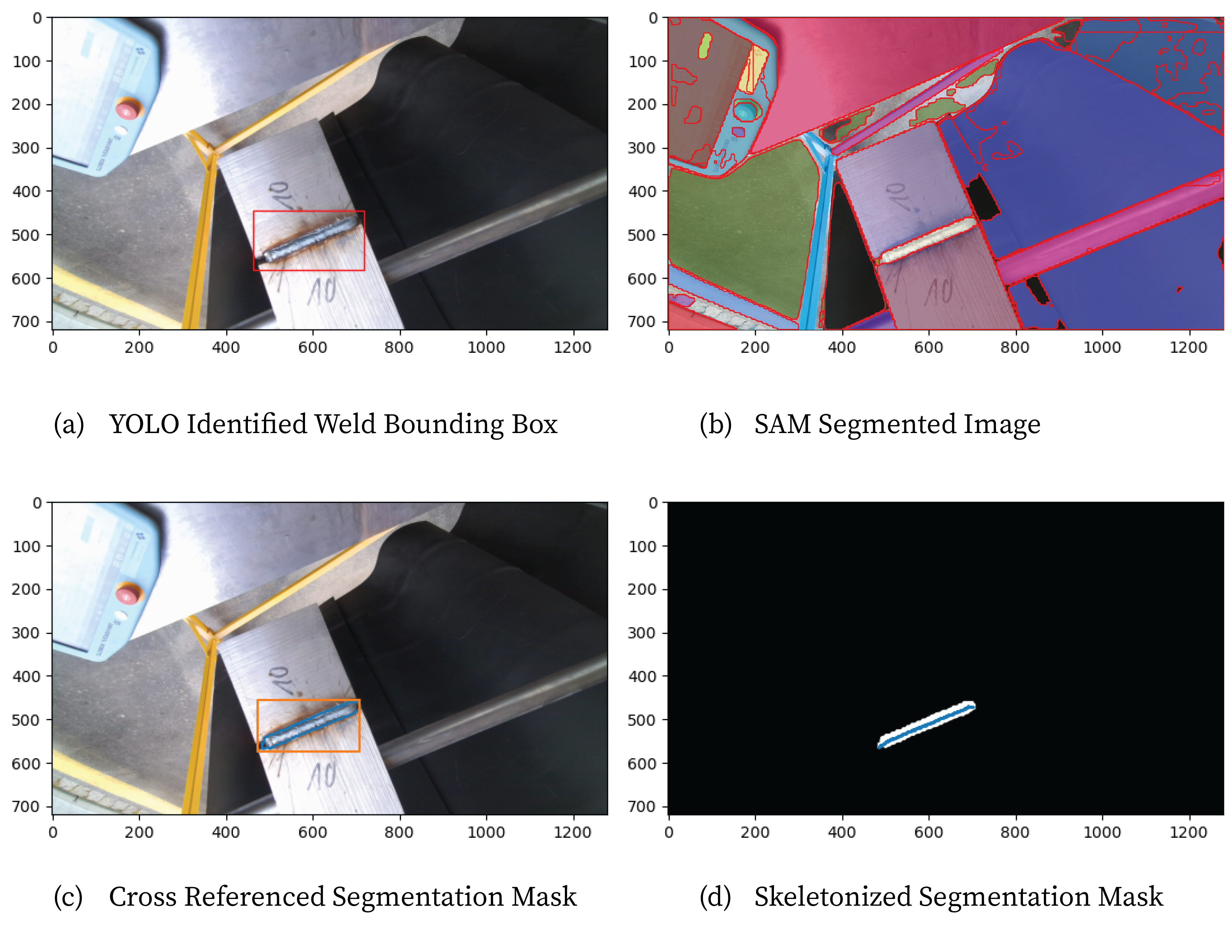

Strategies for finding corners, joints, and connections using computer vision or deep learning. The simplest is to use a custom-trained YOLO model with a label like ‘weld’ and extract geometric information from its predictions.

With a sufficiently accurate weld detection model running, a toolpath for linear beads can be extracted with segmentation and skeletonization, offered by Meta’s SAM and scikit-image.
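For a straight bead, the step from mask to toolpath can be illustrated without a full skeletonization pass. The sketch below is a minimal example, assuming the segmentation mask is already available as a boolean NumPy array. It fits the mask's principal axis as a simpler stand-in for skeletonization, and `linear_bead_path` and `n_waypoints` are hypothetical names, not part of the pipeline described here.

```python
import numpy as np

def linear_bead_path(mask, n_waypoints=5):
    """Return n_waypoints (x, y) pixel coordinates along the mask's principal axis."""
    ys, xs = np.nonzero(mask)
    if xs.size < 2:
        raise ValueError("mask needs at least two foreground pixels")
    pts = np.column_stack([xs, ys]).astype(float)
    centroid = pts.mean(axis=0)
    # Principal axis: eigenvector of the 2x2 covariance with the largest eigenvalue.
    cov = np.cov((pts - centroid).T)
    eigvals, eigvecs = np.linalg.eigh(cov)
    axis = eigvecs[:, np.argmax(eigvals)]
    # Project every pixel onto the axis to find the extent of the bead.
    t = (pts - centroid) @ axis
    ts = np.linspace(t.min(), t.max(), n_waypoints)
    return centroid + ts[:, None] * axis
```

A curved bead would need a real skeleton followed by ordering of the skeleton pixels. The straight-line fit only holds for the linear case.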

This prediction in image space is mapped to poses in 3D space using an Azure Kinect and its SDK.
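The geometry behind that mapping can be sketched with an ideal pinhole model. This is an illustration under stated assumptions, not the SDK's API: the intrinsics `fx`, `fy`, `cx`, `cy`, the transform `T_base_cam`, and both function names are hypothetical, and lens distortion, which a calibrated SDK would account for, is ignored.

```python
import numpy as np

def deproject(u, v, depth_m, fx, fy, cx, cy):
    """Back-project pixel (u, v) at depth depth_m (meters) to a camera-frame point."""
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return np.array([x, y, depth_m])

def to_base_frame(p_cam, T_base_cam):
    """Transform a camera-frame point into the robot base frame.

    T_base_cam is a 4x4 homogeneous transform (assumed to be known from
    a hand-eye calibration or the robot's forward kinematics).
    """
    return (T_base_cam @ np.append(p_cam, 1.0))[:3]
```

Applying `deproject` to each pixel of a predicted toolpath and then `to_base_frame` gives waypoints in the frame the robot plans in.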

For simple cut geometries and reasonable robot poses, naive planning from the controller IK is sufficient, but welds around corners or flanges require sampling-based or gradient-based planners.

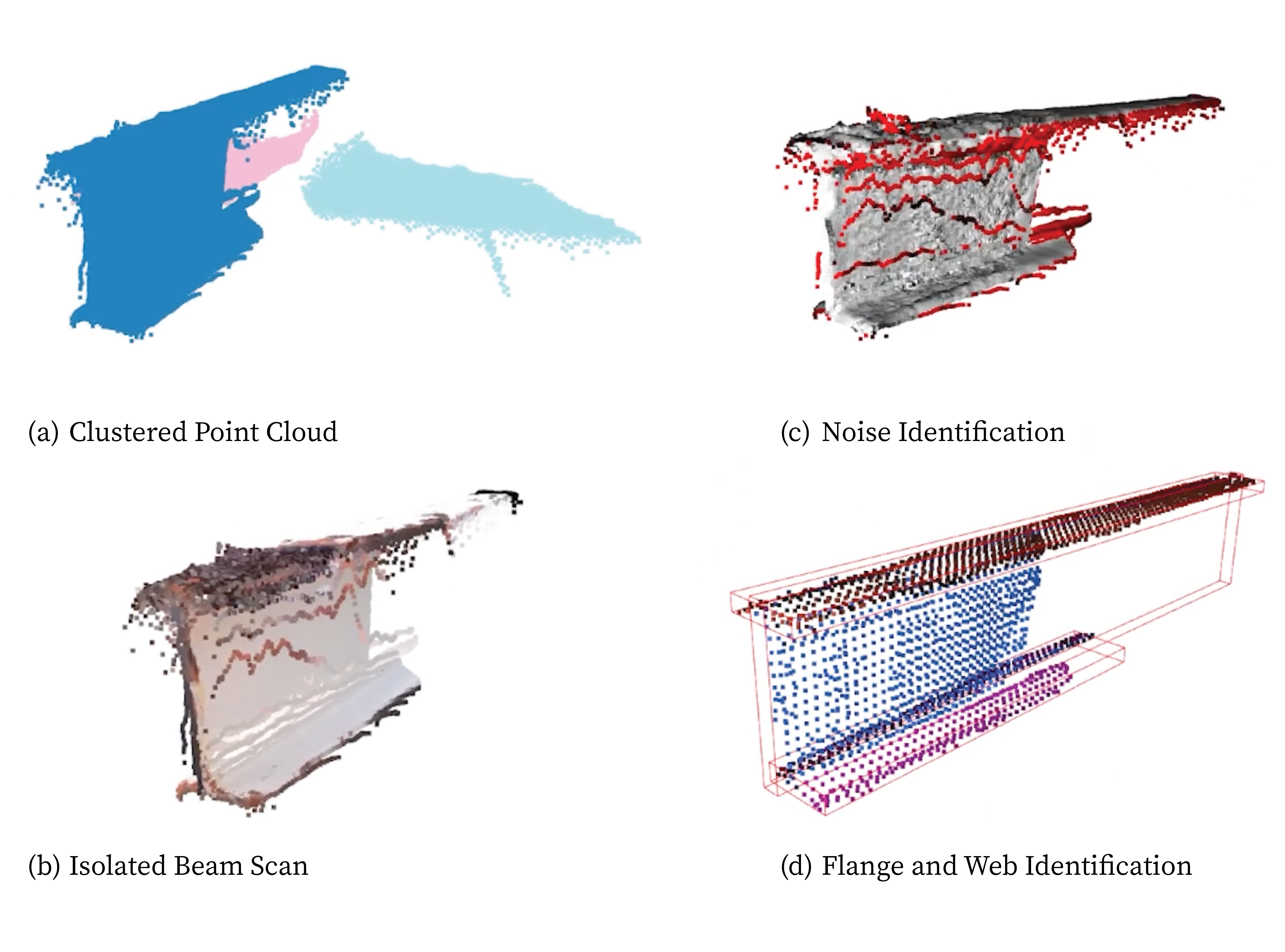

Here, point cloud processing begins with DBSCAN clustering and noise removal, followed by multi-planar RANSAC to extract cut lines.
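The multi-planar step can be sketched in NumPy: fit a plane with RANSAC, remove its inliers, repeat, then intersect two planes to get a candidate cut line. This is a minimal sketch assuming an already clustered and denoised `(N, 3)` array. The function names, thresholds, and iteration counts are illustrative assumptions rather than the values used in this project.

```python
import numpy as np

def fit_plane_ransac(points, n_iters=200, threshold=0.01, rng=None):
    """Return (normal, d, inlier_mask) for the plane n.p + d = 0 with the most inliers."""
    rng = np.random.default_rng(rng)
    best_mask = np.zeros(len(points), dtype=bool)
    best_plane = None
    for _ in range(n_iters):
        a, b, c = points[rng.choice(len(points), size=3, replace=False)]
        normal = np.cross(b - a, c - a)
        norm = np.linalg.norm(normal)
        if norm < 1e-12:  # degenerate (collinear) sample
            continue
        normal = normal / norm
        d = -normal @ a
        mask = np.abs(points @ normal + d) < threshold
        if mask.sum() > best_mask.sum():
            best_mask, best_plane = mask, (normal, d)
    if best_plane is None:
        raise ValueError("no valid plane found")
    return best_plane[0], best_plane[1], best_mask

def extract_planes(points, n_planes=2, **kwargs):
    """Multi-plane RANSAC: fit a plane, drop its inliers, repeat."""
    planes, remaining = [], points
    for _ in range(n_planes):
        normal, d, mask = fit_plane_ransac(remaining, **kwargs)
        planes.append((normal, d))
        remaining = remaining[~mask]
    return planes

def plane_intersection(plane_a, plane_b):
    """Return (point, unit direction) of the line where two non-parallel planes meet."""
    (n1, d1), (n2, d2) = plane_a, plane_b
    direction = np.cross(n1, n2)
    if np.linalg.norm(direction) < 1e-9:
        raise ValueError("planes are parallel")
    # The third row pins down the point on the line closest to the origin.
    A = np.vstack([n1, n2, direction])
    b = np.array([-d1, -d2, 0.0])
    point = np.linalg.solve(A, b)
    return point, direction / np.linalg.norm(direction)
```

The returned line is infinite. Clipping it to the extent of the inlier points would give a finite cut segment.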

Shown here is a GUI demonstrating a RANSAC-esque cuboid strategy on a point cloud stream. The strategy is to sample several points, compute an oriented bounding box, then compare the box orientation with the points that reside inside it.

Many other strategies are shown below: finding corners with a Harris detector, Hough transforms and their intersections, SAM and intersecting regions, and mapping the results to 3D in a PyBullet environment.
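The Hough-intersection idea reduces to solving a 2x2 linear system per pair of lines. The sketch below assumes lines in normal form `(rho, theta)`, the parameterization the standard Hough transform produces. `hough_intersection` and `corner_candidates` are hypothetical helper names.

```python
import math
from itertools import combinations

def hough_intersection(line_a, line_b, eps=1e-9):
    """Intersect two lines given as (rho, theta), where x*cos(theta) + y*sin(theta) = rho.

    Returns (x, y), or None when the lines are (nearly) parallel.
    """
    rho1, theta1 = line_a
    rho2, theta2 = line_b
    # Determinant of [[cos t1, sin t1], [cos t2, sin t2]], equal to sin(t2 - t1).
    det = math.cos(theta1) * math.sin(theta2) - math.sin(theta1) * math.cos(theta2)
    if abs(det) < eps:
        return None
    # Cramer's rule for the 2x2 system.
    x = (rho1 * math.sin(theta2) - rho2 * math.sin(theta1)) / det
    y = (rho2 * math.cos(theta1) - rho1 * math.cos(theta2)) / det
    return x, y

def corner_candidates(lines, width, height):
    """Pairwise intersections that fall inside a width x height image."""
    corners = []
    for a, b in combinations(lines, 2):
        hit = hough_intersection(a, b)
        if hit is not None and 0 <= hit[0] < width and 0 <= hit[1] < height:
            corners.append(hit)
    return corners
```

In practice, nearly parallel lines give unstable intersections, so a minimum angle between the two lines is a sensible extra filter.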

This GUI was developed to serve as an HMI and as a prototyping and debugging tool. Shown here is a scanning job, initiated from an arbitrary pose, with a set of goal poses that orient the camera to survey the surrounding workspace. Real-time vision and inference can run throughout, but observations are saved only at goal poses. Observations can be serialized to JSON, along with the scanning job toolpath. Jobs can be saved, loaded, simulated, and executed.
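One way such a job could be stored is sketched below. The schema is entirely an assumption made for illustration: `Pose`, `ScanJob`, and every field name are hypothetical and do not reflect the tool's actual file format.

```python
import json
from dataclasses import dataclass, field, asdict

@dataclass
class Pose:
    position: list     # [x, y, z] in meters (assumed convention)
    orientation: list  # quaternion [x, y, z, w] (assumed convention)

@dataclass
class ScanJob:
    name: str
    goal_poses: list = field(default_factory=list)    # the scanning toolpath
    observations: list = field(default_factory=list)  # one dict per visited goal pose

    def to_json(self):
        # asdict() recurses into the nested Pose dataclasses.
        return json.dumps(asdict(self), indent=2)

    @classmethod
    def from_json(cls, text):
        data = json.loads(text)
        poses = [Pose(**p) for p in data["goal_poses"]]
        return cls(data["name"], poses, data["observations"])
```

A round trip such as `ScanJob.from_json(job.to_json())` reproduces the job, so the same file can back saving, loading, and replay in simulation.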